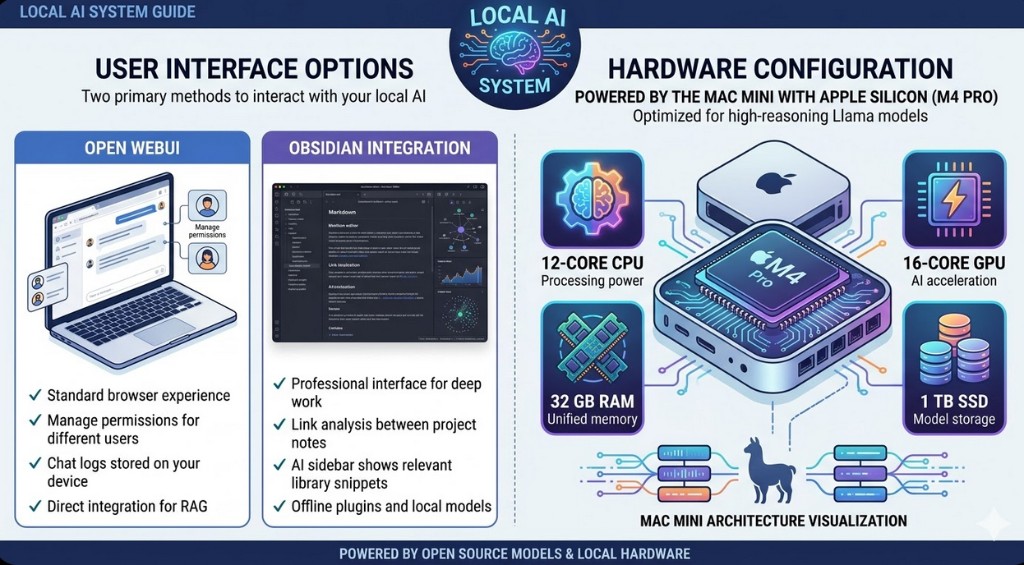

Hardware configuration.

The reference build is a Mac Mini with Apple Silicon (M4 Pro), tuned for reasoning-capable Llama models and local RAG workloads.

Ready to run Local AI on your own hardware?

We size and install the stack—Mac Mini, Linux, or custom. Request a proposal or book a call to scope your setup.

Why this hardware?

Apple Silicon gives strong performance per watt for inference. The Mac Mini fits in an office or server closet, stays quiet, and avoids cloud lock-in. We size and recommend hardware based on your team size and model mix—contact us for a tailored setup.
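To make "sizing by team size and model mix" concrete, here is a minimal back-of-the-envelope sketch in Python. It is not our sizing tool. The function names and every constant (4-bit quantization, 1 GB of KV cache per active user, 20% runtime overhead, 75% of installed memory usable for models) are illustrative assumptions, so treat the output as a starting point for a conversation rather than a guarantee.

```python
# Rough memory estimate for running a quantized LLM locally.
# Every default below is an illustrative assumption, not a measured value.

def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    # params_billion * 1e9 weights * (bits / 8) bytes each, divided by 1e9
    return params_billion * bits_per_weight / 8


def estimate_memory_gb(
    params_billion: float,
    bits_per_weight: float = 4.0,       # assumed 4-bit quantization
    concurrent_users: int = 1,
    kv_cache_gb_per_user: float = 1.0,  # assumed; grows with context length
    overhead_fraction: float = 0.2,     # assumed runtime and buffer overhead
) -> float:
    """Weights plus per-user KV cache, padded by a flat overhead factor."""
    weights = weights_gb(params_billion, bits_per_weight)
    kv_cache = concurrent_users * kv_cache_gb_per_user
    return (weights + kv_cache) * (1 + overhead_fraction)


def fits(required_gb: float, installed_gb: float, usable_fraction: float = 0.75) -> bool:
    """True if the estimate fits in the assumed usable share of installed memory."""
    return required_gb <= installed_gb * usable_fraction


# Example: a 70B-parameter model at 4 bits serving 4 people at once.
need = estimate_memory_gb(70, concurrent_users=4)
print(f"Estimated need: {need:.1f} GB")
print("Fits in 64 GB:", fits(need, 64))
print("Fits in 48 GB:", fits(need, 48))
```

Under these assumptions the weights dominate the estimate, which is why a heavier model usually pushes you to a larger machine before extra seats do.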

About custom hardware.

The Mac Mini is our reference build, but your setup may need something different: more seats, heavier models, or existing servers. We can spec and guide custom hardware—bigger Apple Silicon machines, Linux workstations, or headless servers that run Ollama and your RAG stack.

- Larger Apple Silicon (Mac Studio, Mac Pro) for higher concurrency and bigger models.

- Framework Desktop—compact 4.5L AMD Ryzen AI Max system (up to 128GB LPDDR5x unified memory) with very strong iGPU throughput for local AI.

- Minisforum G7 series and Framework Laptop 16 (Max 395): AMD Ryzen AI 300 and Strix Halo (Ryzen AI Max) options for Linux or Windows deployments.

- Linux workstations or servers with NVIDIA/AMD GPUs for maximum throughput.

- Existing on‑prem hardware—we help you size and install the Local AI stack on what you already have.

Framework Desktop note.

Framework Desktop is a compact desktop platform built around AMD Ryzen AI Max chips (including Ryzen AI Max+ 395 options), with high-bandwidth LPDDR5x unified memory in configurations up to 128GB. For local AI, this is especially useful when you want strong integrated GPU performance without a large tower build. It is also a good fit if you prioritize modular, serviceable hardware and long-term repairability.

Linux setup and alternatives.

The same stack runs on Linux: Ollama, Open WebUI, and local RAG work on Ubuntu, Debian, and other distros. This suits headless servers, rack-mounted machines, or GPU boxes where you don’t need a desktop.

- Ollama and Open WebUI install and run natively on Linux; we provide setup and hardening steps.

- Headless or SSH-only deployments—no display required; access everything via browser from your network.

- Docker and systemd options for service management, updates, and restarts.
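On a headless box, a quick way to confirm the stack is up is to ask Ollama which models it has available. The sketch below uses only the Python standard library and Ollama's `/api/tags` endpoint. The address `http://localhost:11434` is Ollama's default and is an assumption about your install, and the helper names are hypothetical.

```python
import json
import urllib.request

# Ollama's default listen address; an assumption, change it for your server.
OLLAMA_URL = "http://localhost:11434"


def parse_model_names(payload: bytes) -> list[str]:
    """Extract model names from the JSON body returned by /api/tags."""
    data = json.loads(payload)
    return [model["name"] for model in data.get("models", [])]


def list_models(base_url: str = OLLAMA_URL, timeout: float = 5.0) -> list[str]:
    """Ask a running Ollama server which models are available locally."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return parse_model_names(resp.read())


def check(base_url: str = OLLAMA_URL) -> str:
    """Return a one-line status string suitable for logs or a terminal."""
    try:
        names = list_models(base_url)
    except OSError as exc:  # covers refused connections, timeouts, HTTP errors
        return f"unreachable: {exc}"
    listing = ", ".join(names) or "none pulled yet"
    return f"ok, {len(names)} model(s): {listing}"
```

Running `print(check())` over SSH on the server, or `print(check("http://<server-address>:11434"))` from another machine on the network, gives a one-line status. Remote checks only work if Ollama is configured to listen beyond localhost. The same function could be called after a Docker or systemd restart to confirm the service came back.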