Local AI infrastructure for your organization.

Run AI models and retrieval-augmented generation (RAG) locally on a Mac Mini or AMD Ryzen AI Max 300 series (Strix Halo) hardware instead of in a public cloud. Your data stays on your own hardware, inside your own network. No per‑user subscriptions; predictable, on‑prem costs. The same setup can be hosted on a server instead of a Mac Mini if that fits your environment better.

Obsidian knowledge management guide

Want the full breakdown of how Obsidian works with Local AI—architecture, plugins, and pricing? Read the detailed guide.

Obsidian for Local AI

Hugging Face models

Use Hugging Face models in your Local AI stack with Ollama or other runtimes.
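As a rough illustration, the sketch below sends a prompt to a locally running Ollama server over its HTTP API. It assumes Ollama is listening on its default port (11434). The helper names and the model reference in the usage note are hypothetical placeholders, not part of the product.

```python
import json
import urllib.request

# Default address of a local Ollama server (assumption: default install).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_generate_request(model, prompt)) as resp:
        return json.loads(resp.read())["response"]
```

With a server running and a model pulled, a call might look like `generate("hf.co/your-org/your-model-GGUF", "Summarize our leave policy.")`, where the model reference stands in for whichever Hugging Face GGUF model you actually use. The request goes to `localhost` only, so the prompt stays on the machine.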

Hugging Face page

Core value proposition.

Data sovereignty.

Proprietary data stays on your physical hardware. Conversations and documents remain local. No third-party data transfers.

Cost management.

No monthly per-user subscription fees. Compared with a $150 per month cloud subscription (5 users at $30 each), the Mac Mini setup pays for itself in roughly 27 months. Hardware remains a company asset.

Local RAG.

Private knowledge base indexing. Reads your internal PDFs and spreadsheets. Answers questions based on your specific data.
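To make the retrieval step concrete, here is a toy sketch of how a RAG pipeline picks the most relevant passages before asking the model. It uses simple word-count cosine similarity as a stand-in for a real embedding model, and the sample chunks and function names are invented for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a simple stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k chunks most similar to the question."""
    q = tokenize(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, tokenize(c)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, chunks: list[str]) -> str:
    """Combine the retrieved context and the question into one prompt."""
    context = "\n".join(f"- {c}" for c in retrieve(question, chunks))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical text already extracted from internal PDFs and spreadsheets.
CHUNKS = [
    "Travel expenses must be submitted within 30 days of the trip.",
    "The office is closed on public holidays.",
    "Laptops are replaced every four years.",
]
```

Calling `build_prompt("When do I submit travel expenses?", CHUNKS)` produces a prompt whose context includes the travel-expense passage, which the local model then answers from. A production setup would swap the word counts for embeddings stored in a local vector database.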

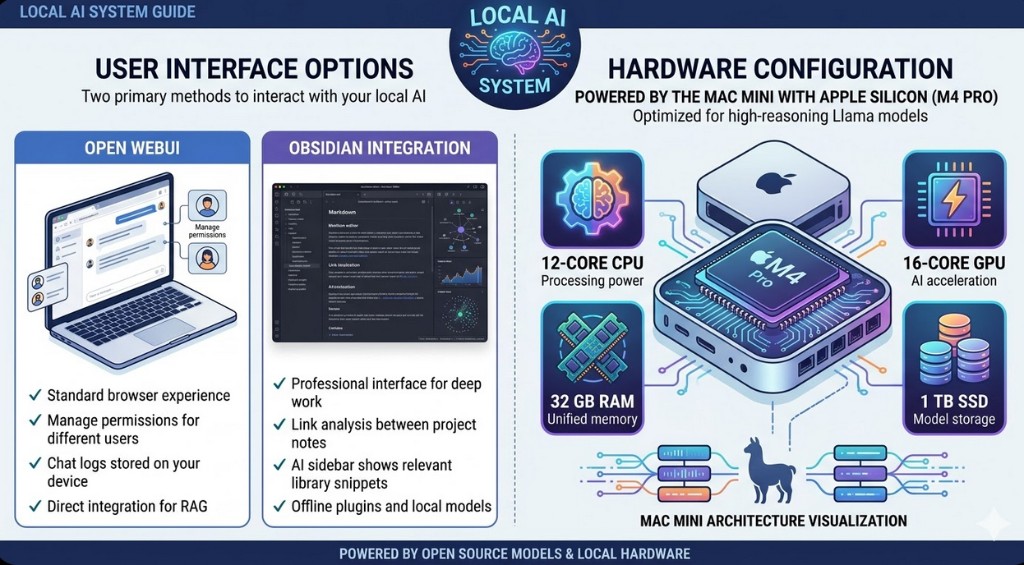

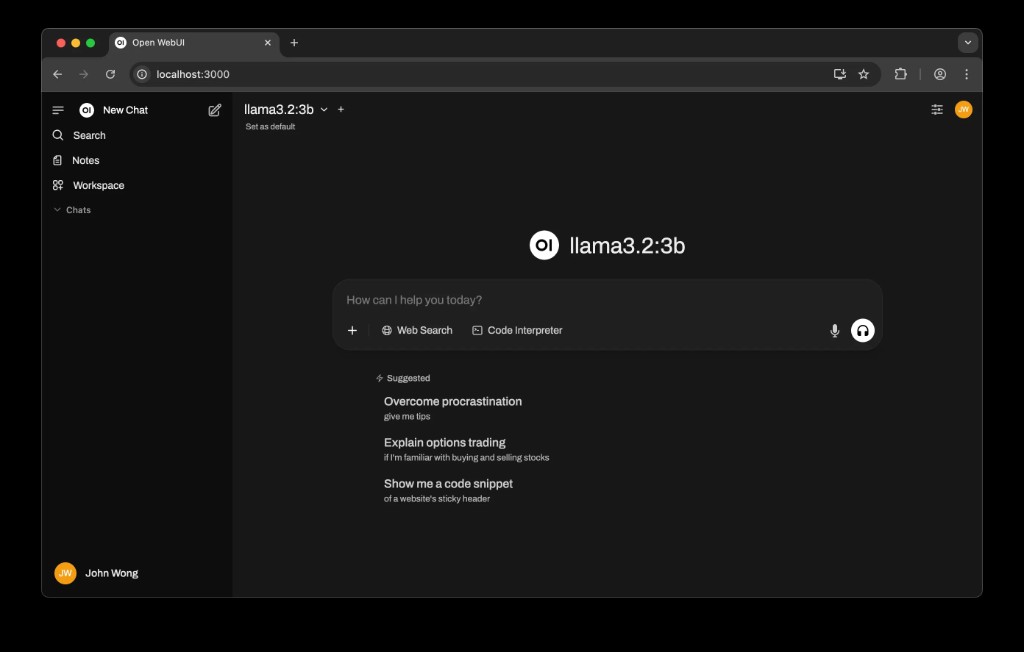

User interface options.

Two primary methods to interact with your local AI.

Open WebUI.

- ✓ Standard browser experience.

- ✓ Manage permissions for different users.

- ✓ Chat logs stored on your device.

- ✓ Direct integration for RAG.

Obsidian integration.

- ✓ Professional interface for deep work.

- ✓ Link analysis between project notes.

- ✓ AI sidebar shows relevant library snippets.

- ✓ Offline plugins and local models.

Hardware configuration.

Powered by the Mac Mini with Apple Silicon (M4 Pro). Optimized for high-reasoning Llama models.

High-performance Linux/Windows alternative.

Hardware option: Minisforum G7 series or Framework Desktop (Ryzen AI Max+ 395 configuration), based on the AMD Ryzen AI Max 300 series (Strix Halo architecture). Recommended baseline: 64 GB LPDDR5x memory and 1 TB NVMe SSD. This option delivers strong integrated GPU performance for Linux or Windows environments, while Framework hardware adds modularity and repairability.

3‑year financial comparison.

| Category | Local AI (Mac Mini) | Local AI (Ryzen AI Max 300 / Strix Halo) | Cloud subscription |
|---|---|---|---|
| Hardware purchase | $1,599 (one‑time) | $1,999+ (one‑time) | $0 |
| Implementation labor | $2,500 (one‑time) | $2,500 (one‑time) | $0 |
| Monthly subscription | $0 | $0 | $150 (5 users at $30 / user / mo) |
| Total cost (36 months) | $4,099 | $4,499+ | $5,400 |
| Data privacy risk | Zero | Zero | Variable |
| Internet required | No | No | Yes |

Ryzen AI Max 300 (Strix Halo) pricing depends on the selected platform and memory tier (for example, Minisforum G7 series or Framework Desktop).
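The arithmetic behind the comparison can be checked with a short calculation. This sketch uses only the figures from the table; the Ryzen hardware price is the table's "$1,999+" lower bound, so its results are a best case. The class and function names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    hardware: float  # one-time hardware purchase, USD
    labor: float     # one-time implementation labor, USD
    monthly: float   # recurring subscription, USD per month

    def total(self, months: int) -> float:
        """Total cost of ownership over the given number of months."""
        return self.hardware + self.labor + self.monthly * months


def payback_months(local: Option, cloud: Option) -> float:
    """Months until avoided subscription fees cover the extra upfront cost."""
    upfront_gap = (local.hardware + local.labor) - (cloud.hardware + cloud.labor)
    monthly_saving = cloud.monthly - local.monthly
    return upfront_gap / monthly_saving


mac = Option("Mac Mini", 1599, 2500, 0)
ryzen = Option("Strix Halo (lower bound)", 1999, 2500, 0)
cloud = Option("Cloud subscription", 0, 0, 150)  # 5 users at $30 each

for opt in (mac, ryzen):
    saved = cloud.total(36) - opt.total(36)
    print(f"{opt.name}: payback {payback_months(opt, cloud):.1f} months, "
          f"saves ${saved:,.0f} over 36 months")
```

With the table's numbers, the Mac Mini option breaks even after about 27.3 months and the Strix Halo option after about 30 months. A larger team shortens the payback period, since the cloud fee scales per user while the local costs are fixed.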

Implementation services.

Professional services billed at $300 per hour.

GDPR and data protection.

Data residency.

All personal data processed by the AI remains on‑premises. There is no “transfer to third countries” as defined under GDPR. The data never leaves your physical control or jurisdiction.

Right to erasure.

A local vector database lets you delete a document completely. Once the file and its indexed chunks are removed from the index, the AI can no longer retrieve or quote it.
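As a simplified illustration of erasure at the index level, the sketch below tracks which chunks came from which file so that deleting a file removes every chunk derived from it. It is a plain-Python stand-in, not the API of any particular vector database, and the class, method, and file names are hypothetical. A real store would also keep an embedding per chunk.

```python
from collections import defaultdict


class LocalIndex:
    """Toy knowledge base that remembers the source file of every chunk."""

    def __init__(self) -> None:
        self._chunks: dict[int, tuple[str, str]] = {}  # chunk_id -> (source, text)
        self._by_source: defaultdict[str, set[int]] = defaultdict(set)
        self._next_id = 0

    def add(self, source_file: str, text: str) -> int:
        """Index one chunk of text and record which file it came from."""
        chunk_id = self._next_id
        self._next_id += 1
        self._chunks[chunk_id] = (source_file, text)
        self._by_source[source_file].add(chunk_id)
        return chunk_id

    def delete_source(self, source_file: str) -> int:
        """Remove every chunk derived from a file; return how many were removed."""
        chunk_ids = self._by_source.pop(source_file, set())
        for chunk_id in chunk_ids:
            del self._chunks[chunk_id]
        return len(chunk_ids)

    def search(self, term: str) -> list[str]:
        """Return texts of chunks containing the term (stand-in for vector search)."""
        return [text for _, text in self._chunks.values() if term.lower() in text.lower()]


index = LocalIndex()
index.add("contract_2021.pdf", "Jane Doe signed the supplier contract.")
index.add("contract_2021.pdf", "Jane Doe's address is on page 2.")
index.add("budget.xlsx", "Q3 budget approved.")

removed = index.delete_source("contract_2021.pdf")
```

After `delete_source` runs, `removed` is 2 and `index.search("Jane Doe")` returns an empty list, while the budget entry is still searchable. Keeping a source-to-chunk mapping is the design choice that makes a per-file erasure request a single operation.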

No training.

Your intellectual property is never used to train third‑party models. You maintain total control over your organizational data.